Why a Cybersecurity Founder Is Building Health Tech, and Why That Combination Is Exactly Right

People ask me this question more than almost any other.

You spent years in cybersecurity. You built in fintech. Why are you in health tech now? Is this a pivot? Did something change?

The honest answer is: nothing changed about the problem I work on. Only the industry did. The problem I’ve always been interested in is how to build systems that handle sensitive, consequential data at scale without breaking the trust of the people that data belongs to. That problem exists everywhere. I just followed it to the place where it is most urgent and most unsolved.

That place is African healthcare.

What cybersecurity actually teaches you

Most people think of cybersecurity as a technical discipline. And it is, but the technical part is not the most important part.

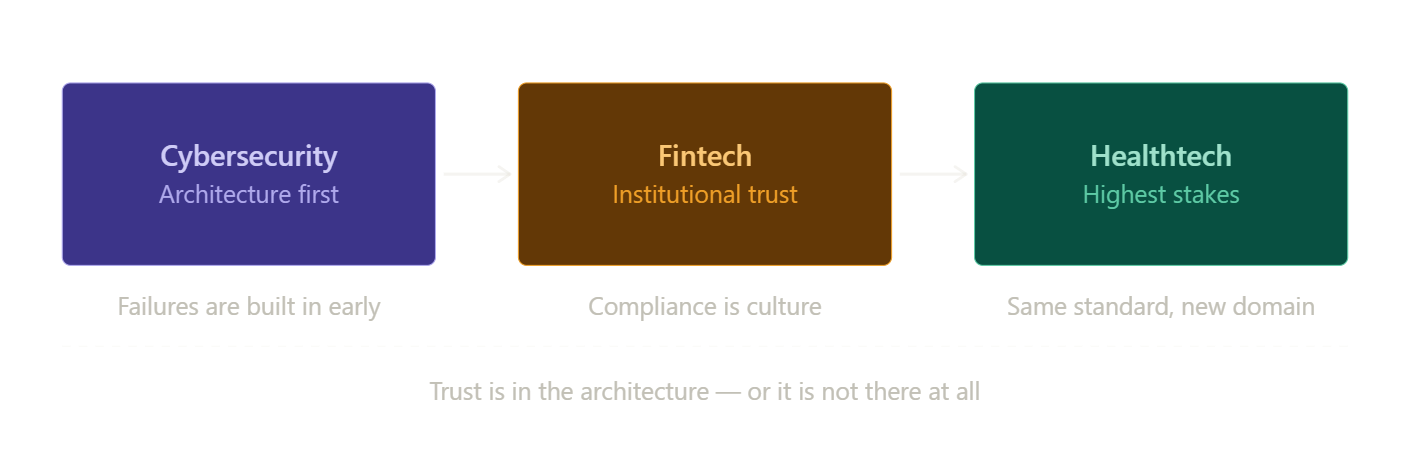

What cybersecurity actually teaches you is how to think about systems under adversarial conditions. You are not building for the best-case scenario. You are building for the moment someone is actively trying to break what you built, whether that someone is a bad actor from the outside, or a failure point you didn’t anticipate from the inside.

This changes how you design things fundamentally.

When I was working in cybersecurity, the thing I kept observing was that the companies that got it wrong, the ones that ended up breached, the ones that lost data they were entrusted to protect, almost never failed because someone outsmarted them technically. They failed because they made a structural decision early on that seemed fine at the time. A shortcut in the architecture. A compliance requirement treated as a checkbox rather than a principle. Security bolted on at the end because the budget ran out before they got to it.

The attack came later. But the vulnerability was built in from the beginning.

I carried that lesson with me into every venture afterward. You cannot secure a system that was not designed to be secure. You cannot make something trustworthy retroactively. Trust is either in the architecture or it is not there at all.

What fintech confirmed

Fintech deepened the same understanding in a different context.

Financial data is sensitive in a way that most people understand immediately and personally. When you are building systems that touch people’s money, their savings, their transactions, their financial identity, you are operating with a level of consequence that does not allow for “we’ll fix it in the next sprint.”

What fintech added to the cybersecurity foundation was the regulatory dimension. Compliance in fintech is not optional, and it is not static. The frameworks evolve, the expectations shift, and the companies that treat compliance as a minimum bar rather than a design principle spend most of their time catching up. The companies that build compliance into their culture, that treat it as an expression of what they actually believe about how data should be handled, those are the ones that scale without crises.

I also learned something about trust that I don’t think you can learn without being close to financial services: institutions, real institutions, not startups playing institutional, extend trust to infrastructure, not to intentions. It does not matter how good your mission sounds in a pitch deck. If your data governance does not hold up to scrutiny, no serious health system, no serious hospital network, no serious government partner will sit across the table from you for long.

You earn institutional trust through institutional standards. That is the only way.

Why health data is the hardest version of this problem

Health data is, in my view, the most sensitive category of personal data that exists.

Financial data can be corrected. An account can be frozen, a transaction disputed, a credit record repaired. The consequences of financial data mismanagement are serious, but most of them are recoverable.

Health data carries a different weight. A person’s diagnosis, their medication history, their chronic conditions: this information can affect their employment, their insurance, their relationships, and their safety, in ways that vary depending on where in the world they live. And unlike a compromised bank account, you cannot issue someone a new medical history.

The obligation that comes with health data is not just legal. It is ethical. It is, I would argue, one of the highest trust obligations that any organization can carry.

And yet health tech, especially in emerging markets, is full of ventures that are building fast and governance-light. Collecting data without clear frameworks for how it will be held, used, or protected. Optimizing for growth metrics in ways that treat data handling as a secondary concern.

This is not unique to Africa. But the stakes in the African context are particular, because the patients whose data is at stake are often in health systems with the least institutional protection, the least recourse, and the least historical experience of digital health infrastructure that was actually built for them rather than built to extract from them.

If you are going to ask those patients to trust a platform with their health data, the architecture has to be right. The governance has to be real. The standard has to be the highest available, not because a regulator is watching, but because the obligation is real whether anyone is watching or not.

Why this background is not a detour but the qualification

Here is what I want to say directly to anyone who has wondered whether my background is a mismatch for health tech:

The African healthcare system does not need another founder who learned health data governance after they started building. It needs founders who already understand what it means to be trusted with data that matters, and who built that understanding in fields where the consequences of getting it wrong were immediate and visible.

Cybersecurity and fintech are the hardest schools for data responsibility that exist outside of healthcare itself. I attended both of them before I started building Medlitics.

That means when we are designing the architecture for how Medlitics handles patient data, how it is stored, who can access it, what the governance framework looks like, how we think about security not as a feature but as a foundational design constraint, I am not learning these principles for the first time. I am applying them in a new domain, with the same non-negotiable standard.

What this means for Medlitics

Medlitics is building health analytics infrastructure for the African market. The work is about surfacing the data that has always existed in African health systems, in clinics, in patient records, in population patterns, and making it usable: for clinicians making decisions, for health systems understanding their patients, for the kind of analysis that leads to better outcomes at scale.

To do that work, we have to be trusted. Not just liked. Not just well-intentioned. Trusted, in the institutional sense, by the health systems and clinical partners we work with, and in the personal sense, by the patients whose data we are responsible for.

That trust has to be earned through standards. Not through marketing. Not through a mission statement. Through the way the system is actually built.

That is the only kind of trust that holds when something goes wrong, when there is pressure to cut a corner, when there is a shortcut that would accelerate growth, when the architecture decision that seemed safe starts to show its limitations.

The answer to all of those moments is already in the foundation. Or it is not there at all.

Why now

The African health tech space is significantly underinvested relative to the scale of the problem. That gap will close, not because someone decided it should, but because the economics and the need will force it.

The ventures that will define what African digital health looks like for the next generation are being built right now. The ones that will matter are not the ones moving fastest. They are the ones building with the right foundation, because in a sector this consequential, the shortcuts become the stories that end companies.

I am building Medlitics to be the infrastructure that serious health systems can trust. That means building it the way I learned to build anything that carries real consequences: architecture first, standards non-negotiable, governance real from day one.

The background is not a detour.

It is exactly why I am the right person to build this.

Next week: the one operating philosophy that runs through every company I have built, and why it matters more than any individual product decision. Subscribe so you don’t miss it.